International organizations that want to reach different user groups face challenges:

- Translating content

- Maintaining language versions

- Duplicating websites

- Managing documents multiple times

The workload multiplies with each additional language.

The real challenge lies not only in the translation itself, but in keeping content consistent over the long term.

Because knowledge is constantly changing.

The classic model: language as content structure

Traditionally, multilingualism is handled at the content level:

- German → its own content

- English → its own content

- Turkish → its own content

- French → its own content

Every language requires:

- its own pages

- its own documents

- its own updates

- its own quality assurance

The result is well known: versions drift apart, and content becomes outdated or is only partially maintained.

Language becomes an organizational burden.

The technological change of perspective

Modern AI systems - such as those used in the rms AI Suite - solve this problem not through translation, but through semantic understanding.

The knowledge base no longer needs to be multilingual.

Our products make it possible for users to ask questions in their own language - while the underlying content still only needs to be maintained in a single language.

A query can be made in:

- German

- English

- Turkish

- Spanish

- French

- or any other language supported by the language model and the AI Suite.

The response is automatically provided in the same language.

Without additional content maintenance.

Without translation workflow.

Without parallel websites.

Without translated documents.

AI understands meaning, not language

The key lies in what is known as semantic understanding.

Modern language models do not simply convert texts or translate them in the traditional way.

Instead, each query is converted into a mathematical representation of its meaning - known as an embedding.

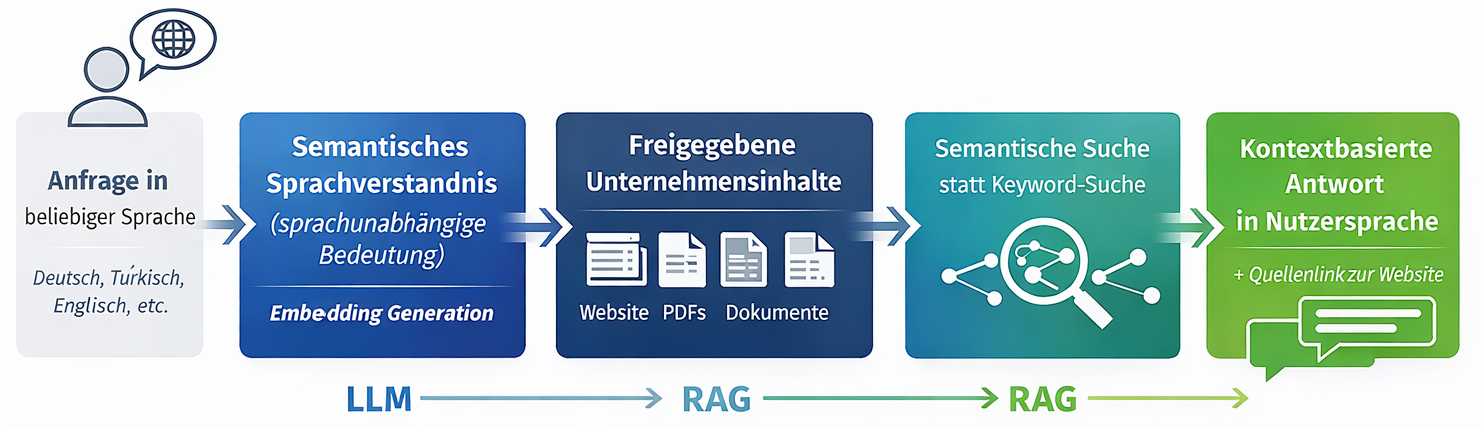

Text → Meaning → Knowledge matching → Answer

This means that statements with the same meaning lie close to each other in this representation - regardless of language or wording.

For example:

- "Was machen Sie?"

- "What do you do?"

- "Ne yapıyorsunuz?"

are represented almost identically at the technical level.
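The idea of "closeness" can be made concrete with cosine similarity, a common way to compare embeddings. The following is a minimal sketch, not the rms AI Suite's implementation: the four-dimensional vectors in `TOY_EMBEDDINGS` are invented for illustration, and the third sentence is a hypothetical counterexample. A real multilingual embedding model would produce vectors with hundreds of dimensions.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; values near 1.0 mean similar direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical embeddings, invented for this example only.
# They are hand-picked so that the two questions with the same meaning
# point in nearly the same direction, while the unrelated one does not.
TOY_EMBEDDINGS = {
    "What do you do?": [0.81, 0.10, 0.55, 0.12],
    "Ne yapıyorsunuz?": [0.79, 0.14, 0.57, 0.10],
    "Where can I park?": [0.05, 0.92, 0.08, 0.37],
}

def similarity(text_a: str, text_b: str) -> float:
    """Look up both toy embeddings and compare them."""
    return cosine_similarity(TOY_EMBEDDINGS[text_a], TOY_EMBEDDINGS[text_b])

if __name__ == "__main__":
    # Same meaning, different languages: similarity close to 1.
    print(similarity("What do you do?", "Ne yapıyorsunuz?"))
    # Different meaning: clearly lower similarity.
    print(similarity("What do you do?", "Where can I park?"))
```

With these toy values, the English and Turkish questions score far higher against each other than either does against the unrelated question, which is the property a multilingual embedding model is trained to provide.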

All solutions in the rms AI Suite work according to the principle of Retrieval-Augmented Generation (RAG). Two components perform clearly separate tasks.

The LLM (Large Language Model)

The language model is responsible for:

- Automatically recognizing the language

- Understanding the user question

- Interpreting context and intention

- Formulating a natural answer

However, the LLM does not generate business knowledge.

The RAG system

This is the decisive difference from classic AI systems.

RAG combines the language model with the real knowledge base:

- Website content

- PDFs

- Documentation

- Knowledge platforms

- etc.

The system searches the content provided and provides the language model with the relevant context.

The process:

Question → Search of the knowledge base → Relevant context → Language model → Answer

Our rms AI Suite does not hallucinate. It works with existing information; if there is none, it says so.
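The retrieval step and the "no information" case can be sketched in a few lines. This is an illustrative sketch under stated assumptions, not the rms AI Suite's actual code: `answer`, `Passage`, `FALLBACK`, the `min_score` threshold, and the `example.org` URLs are all hypothetical. `toy_embed` is a keyword-based stand-in for a real multilingual embedding model, and `toy_generate` is a stand-in for the language model.

```python
import math
import re
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class Passage:
    text: str
    source: str

# Hypothetical fallback wording; a real system would phrase it in the user's language.
FALLBACK = "No information on this topic is available."

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return sum(x * y for x, y in zip(a, b)) / norm if norm else 0.0

def answer(
    question: str,
    passages: list[Passage],
    embed: Callable[[str], list[float]],
    generate: Callable[[str, str], str],
    min_score: float = 0.5,
    top_k: int = 3,
) -> str:
    """Retrieve relevant passages, then let the model answer from them only."""
    q_vec = embed(question)
    scored = [(cosine(q_vec, embed(p.text)), p) for p in passages]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    relevant = [p for score, p in ranked if score >= min_score][:top_k]
    if not relevant:
        # Nothing in the knowledge base matches: say so instead of guessing.
        return FALLBACK
    context = "\n".join(f"{p.text} (source: {p.source})" for p in relevant)
    return generate(question, context)

# --- Stand-ins for demonstration only ---------------------------------
# Maps a few keywords from different languages onto two topic dimensions.
TOY_TOPICS = {"öffnungszeiten": 0, "opening": 0, "hours": 0,
              "standort": 1, "located": 1, "nerede": 1}

def toy_embed(text: str) -> list[float]:
    vec = [0.0, 0.0]
    for word in re.findall(r"\w+", text.lower()):
        if word in TOY_TOPICS:
            vec[TOY_TOPICS[word]] += 1.0
    return vec

def toy_generate(question: str, context: str) -> str:
    return f"Answer to {question!r}, based on: {context}"

KNOWLEDGE_BASE = [
    Passage("Unsere Öffnungszeiten: Montag bis Freitag, 9 bis 17 Uhr.",
            "https://example.org/kontakt"),
    Passage("Sie finden uns am Standort Berlin.", "https://example.org/standort"),
]

if __name__ == "__main__":
    print(answer("What are your opening hours?", KNOWLEDGE_BASE, toy_embed, toy_generate))
    print(answer("Do you sell bicycles?", KNOWLEDGE_BASE, toy_embed, toy_generate))
```

The design choice worth noting is the threshold: when no passage scores above `min_score`, the language model is never called, so it has no opportunity to invent an answer.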

The most important point: content becomes independent of language

This is precisely the biggest practical advantage of our AI Suite:

Users can ask questions in any supported language - even if the knowledge base only exists in one language.

In concrete terms, this means

A website maintained in German can:

- Answer questions in English

- Explain content in Turkish

- Summarize information in French

without content ever having been created in these languages.

The content remains centralized. The language adapts dynamically to the user.

Multilingualism becomes an access layer

- In the past: Create content multiple times.

- Today: Provide content once and make it accessible regardless of language.

Our AI products act as an intelligent mediation layer between people and knowledge.

Navigation is becoming less important. Dialogue becomes the user interface.

A practical example

A user makes a request in Turkish:

"Ne yapıyorsunuz ve sizi nerede bulabilirim?"

Although all content is available only in German, the AI chatbot provides:

- an understandable answer in Turkish

- based on existing content

- including a reference to the original source

The knowledge base remains unchanged.
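How the three properties of such an answer (user's language, existing content only, source reference) might be requested from a language model can be sketched as a prompt template. This is an assumption about one possible approach, not a description of how the rms AI Suite builds its prompts: `build_prompt`, `Passage`, the instruction wording, the German passage text, and the `example.org` URL are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Passage:
    text: str
    source: str

def build_prompt(question: str, passages: list[Passage]) -> str:
    """Combine retrieved passages and the user question into one instruction."""
    numbered = "\n".join(
        f"[{i}] {p.text} (source: {p.source})"
        for i, p in enumerate(passages, start=1)
    )
    return (
        "Answer the question using only the numbered passages below.\n"
        "Reply in the same language as the question.\n"
        "Cite the passage number and its source for every statement.\n"
        "If the passages do not contain the answer, say so.\n\n"
        f"Passages:\n{numbered}\n\n"
        f"Question: {question}"
    )

if __name__ == "__main__":
    # German knowledge base content (hypothetical), Turkish question from the example above.
    passages = [
        Passage("Wir entwickeln KI-Lösungen. Sie finden uns in Berlin.",
                "https://example.org/ueber-uns"),
    ]
    print(build_prompt("Ne yapıyorsunuz ve sizi nerede bulabilirim?", passages))
```

The German passages are passed to the model unchanged; only the instruction asks for a reply in the language of the question, which is why the knowledge base itself never needs to be translated.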

From technology to practical entry

The underlying technologies - large language models, semantic embeddings and retrieval architectures - may initially appear complex.

In practice, however, the approach is pragmatic.

Organizations do not have to create new content or rebuild existing systems.

The rms AI Suite uses existing content and simply adds a new level of interaction.

Whether via AI chatbot, AI Search or AI Assistant:

Users receive answers in the language in which they ask - without the knowledge base having to contain this language.

Many organizations deliberately start small, for example with an AI assistant on the website, and then expand its use step by step.

Not as a transformation project.

But rather as a natural evolution of digital information systems.

AI as a new interface between people and knowledge

The real innovation is therefore not in generating new content.

It lies in making existing knowledge accessible:

- context-related

- dialog-oriented

- language-independent

- comprehensible

Or to put it another way:

Content no longer has to be understood everywhere.

It is enough if the AI understands it.

Conclusion

Large language models generate language. RAG systems provide context.

Only the interaction of both components enables reliable, multilingual AI responses - without multilingual content maintenance.

The rms AI Suite turns this into a practical approach:

One knowledge base. Every language. Every question.