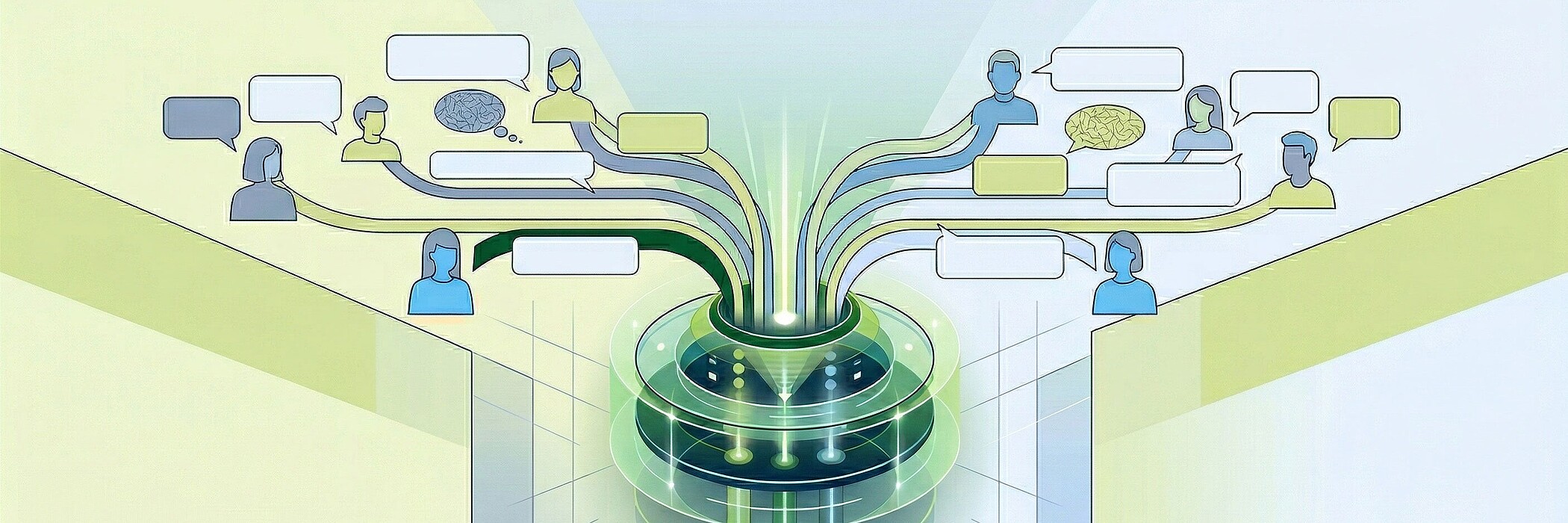

Today we are further ahead. Thanks to large language models (LLMs) and agent architectures, we no longer build rigid decision trees. We use the LLM as an intelligent "dispatcher" that understands what the user really wants and then routes the request to the specialized actor that can handle it.

The new standard: the LLM dispatcher as gatekeeper

Intent recognition used to be simple keyword matching. Today it is semantic understanding. In our architecture, every query first goes to an LLM that has been given precise prompts for intent recognition. If a user writes "Can we have a chat?" or "I need an appointment", the LLM recognizes the contact_request or appointment_booking intent. It doesn't need an exact keyword, because it understands the context.
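A minimal sketch of such a dispatcher might look like the following. The prompt wording, the `none` fallback label, and the `detect_intent` and `fake_llm` names are assumptions made for illustration; `fake_llm` only stands in for a real model call so the example runs offline.

```python
from typing import Callable

# Intent labels: the first two come from the article, "none" is an assumed fallback.
INTENTS = ("contact_request", "appointment_booking", "none")

# Hypothetical classification prompt; a production prompt would be more detailed.
PROMPT_TEMPLATE = (
    "Classify the user message into exactly one of these intents: "
    "{intents}. Answer with the label only.\n\nUser message: {message}"
)


def detect_intent(message: str, llm: Callable[[str], str]) -> str:
    """Ask the LLM for a label and fall back to 'none' on anything unexpected."""
    prompt = PROMPT_TEMPLATE.format(intents=", ".join(INTENTS), message=message)
    label = llm(prompt).strip().lower()
    return label if label in INTENTS else "none"


def fake_llm(prompt: str) -> str:
    """Offline stand-in for a model call (a real LLM would not rely on keywords)."""
    text = prompt.rsplit("User message:", 1)[-1].lower()
    if "appointment" in text:
        return "appointment_booking"
    if "chat" in text:
        return " Contact_Request\n"  # messy output, normalized by detect_intent
    return "I am not sure."
```

Validating the returned label against a fixed list keeps free-form model output from leaking into the routing logic: anything unexpected is treated as "no transaction intent".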

The architecture: when the agent takes the wheel

As soon as the system recognizes a specific intent, the mode changes. The chat is handed over to a specialized agent.

- Data collection & dialog: The agent now knows that it is pursuing a goal (e.g. making an appointment). It asks specifically for the missing information: "Gladly, on which day is it convenient for you?"

- Action (function calling): The agent is not just a text generator; it can act. It uses "tools" to call external actors:

  - Sending emails via API.

  - Entering appointments in the calendar backend.

  - Database updates in the CRM.

- The return: As soon as the mission is completed (the appointment is set, the email is sent), the agent hands the conversation back to the RAG system.
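The three phases above (collecting data, calling a tool, handing back) can be sketched as a small slot-filling agent. Everything named here is hypothetical: the `AppointmentAgent` class, the `day` and `time` slots, and `fake_calendar_tool`, which stands in for a real calendar backend. A real agent would also let the LLM extract slot values from free text instead of taking each reply verbatim.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# Assumed questions for each missing piece of information.
QUESTIONS = {
    "day": "Gladly, on which day is it convenient for you?",
    "time": "And at what time?",
}


@dataclass
class AppointmentAgent:
    """Collects the required slots, calls a tool, then signals completion."""

    book_appointment: Callable[[str, str], str]  # injected tool (hypothetical)
    slots: dict = field(default_factory=lambda: {"day": None, "time": None})
    pending: Optional[str] = None  # slot the last question asked about
    done: bool = False             # True once control can go back to RAG

    def step(self, user_message: str) -> str:
        # Store the answer to the question asked in the previous turn.
        if self.pending is not None:
            self.slots[self.pending] = user_message.strip()
            self.pending = None
        # Ask for the next missing slot, if any.
        for name, value in self.slots.items():
            if value is None:
                self.pending = name
                return QUESTIONS[name]
        # All data is available: call the tool and mark the mission complete.
        confirmation = self.book_appointment(self.slots["day"], self.slots["time"])
        self.done = True
        return confirmation


def fake_calendar_tool(day: str, time: str) -> str:
    """Stand-in for a calendar backend call."""
    return f"Appointment booked for {day} at {time}."
```

The `done` flag is the handover signal: as long as it is false the agent keeps the conversation, and once it flips the surrounding system can return to knowledge-based answering.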

Back to basics: RAG as a source of knowledge

If there is no specific transaction intent (such as "book appointment") or the agent has completed its work, the RAG system (Retrieval-Augmented Generation) takes over again. This is where the vector database (such as Chroma DB) comes into play. It serves as the "long-term memory". Instead of fobbing the user off with "I don't know", the system searches documents, manuals and FAQs and grounds its answer in this internal data.
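To illustrate the retrieval step without depending on a running vector database, here is a toy sketch that ranks documents by cosine similarity over word counts. This is a deliberately simplified stand-in: a vector database such as Chroma DB would use learned embeddings and an index instead, and the function names and sample documents are invented for the example.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': a bag of lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list, k: int = 1) -> list:
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, documents: list) -> str:
    """Put the retrieved passages in front of the question for the LLM."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k=2))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


# Invented sample knowledge base.
DOCS = [
    "Our support hotline is open Monday to Friday from 9 to 17.",
    "Invoices are sent by email at the start of each month.",
    "The device manual describes how to reset the password.",
]
```

Calling `retrieve("How do I reset my password?", DOCS)` selects the manual entry, and `build_prompt` shows the basic RAG idea: the model answers from retrieved passages rather than from memory alone.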

Backend processes: From intent to action

The process flow at a glance:

| Step | Actor | Action |
|---|---|---|
| Input | User | Submits a request (no matter how formulated). |
| Dispatching | LLM | Intent recognition via prompting (booking, support, info?). |
| Handover | Agent | Takes over the chat if an intent has been recognized. |
| Execution | Agent + backend | Agent collects data and uses tools (mail, calendar, etc.). |
| Return | RAG (Chroma DB) | After completion, the knowledge-based search takes over again. |
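The flow in the table can be sketched as a small orchestrator that holds at most one active agent and otherwise falls back to RAG. All names here (`Orchestrator`, `TwoTurnAgent`, the lambda stubs) are hypothetical; the intent detector, agent and RAG answer are injected so that real components could replace the stubs.

```python
from typing import Callable, Dict, Optional


class TwoTurnAgent:
    """Toy agent: asks one question, then reports completion."""

    def __init__(self) -> None:
        self.done = False
        self.turn = 0

    def step(self, user_message: str) -> str:
        self.turn += 1
        if self.turn == 1:
            return "On which day?"
        self.done = True
        return f"Booked for {user_message}."


class Orchestrator:
    """Dispatch -> handover -> execution -> return, one message at a time."""

    def __init__(
        self,
        detect_intent: Callable[[str], str],
        agent_factories: Dict[str, Callable[[], TwoTurnAgent]],
        answer_with_rag: Callable[[str], str],
    ) -> None:
        self.detect_intent = detect_intent
        self.agent_factories = agent_factories
        self.answer_with_rag = answer_with_rag
        self.active: Optional[TwoTurnAgent] = None

    def handle(self, message: str) -> str:
        if self.active is None:
            # Dispatching: only classify when no agent owns the chat.
            factory = self.agent_factories.get(self.detect_intent(message))
            if factory is None:
                return self.answer_with_rag(message)  # no transaction intent
            self.active = factory()  # handover
        reply = self.active.step(message)  # execution
        if self.active.done:
            self.active = None  # return: the next message goes through dispatch again
        return reply


# Stub components so the sketch runs on its own.
bot = Orchestrator(
    detect_intent=lambda m: "appointment_booking" if "appointment" in m.lower() else "none",
    agent_factories={"appointment_booking": TwoTurnAgent},
    answer_with_rag=lambda m: f"[RAG answer for: {m}]",
)
```

Skipping intent detection while an agent is active is a design choice in this sketch: it keeps a reply like "Friday" from being misread as a new request in the middle of a booking.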

Why this separation is the key to success

Why do we send the user to the agent first and then back to the RAG?

- Precision: An agent that specializes in "appointments" follows a fixed protocol until all the data is available, which leaves far less room for hallucination than free-form generation.

- Ability to act: Pure RAG systems can only respond. Only the agent handover turns a chatbot into a real digital employee that completes tasks.

- Natural flow: For the user, it feels like a single, fluid conversation. They don't notice that the system is switching between intent recognition, specialized logic and vector search in the background.

Conclusion: The future is "Agentic"

We are no longer building systems that only generate text. By combining LLM-based routing, action-oriented agents and efficient vector databases such as Chroma DB, we create solutions that think for themselves. We build digital employees who not only know where the information is, but also how to use the right tool from the backend toolbox.