AI Solutions for Solarize Energy Solutions GmbH

AI-supported efficiency for decentralized energy supply

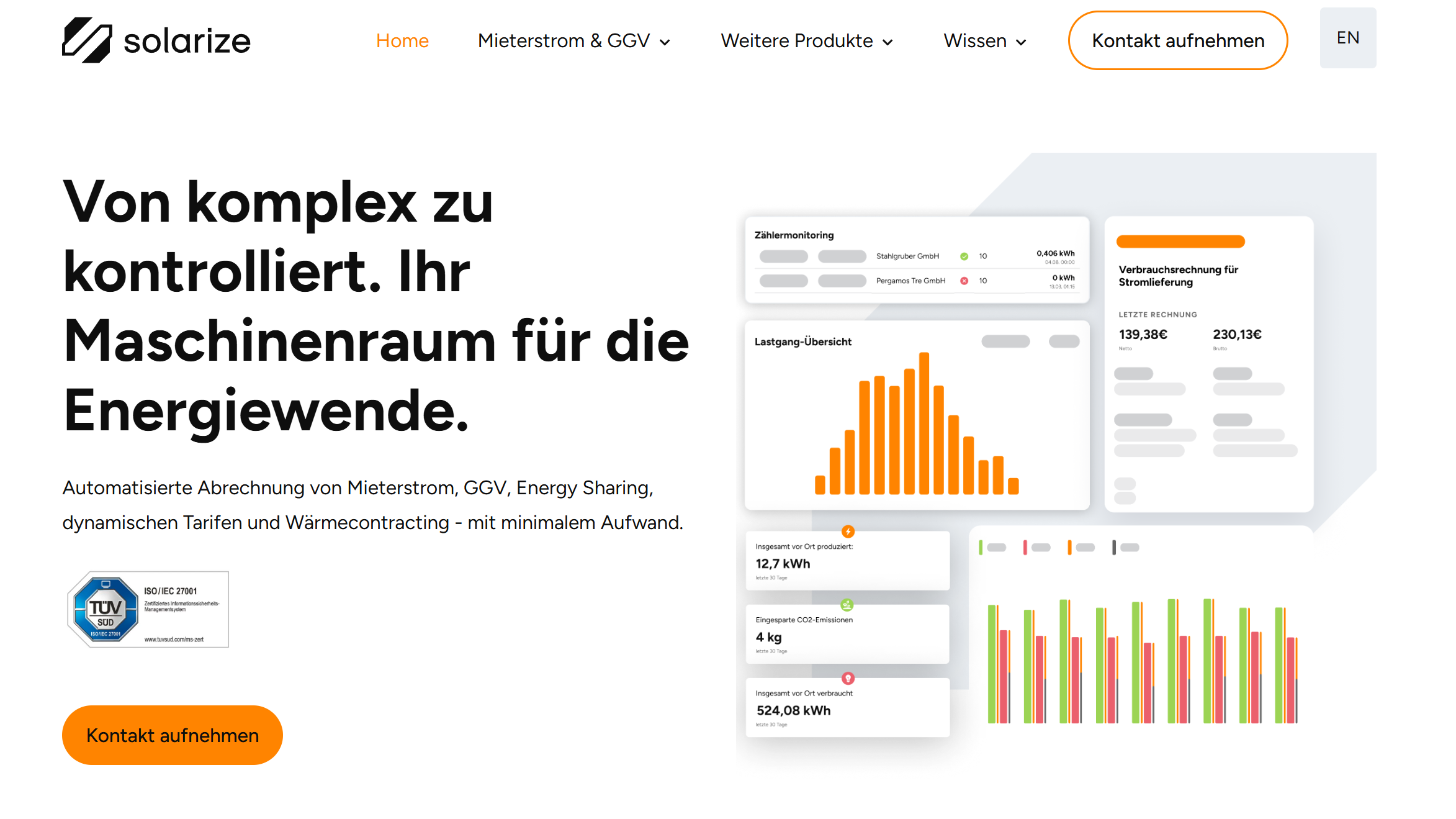

Solarize is a pioneer of the energy transition. With its innovative meter-to-cash software-as-a-service, the company enables the implementation of complex tenant electricity models. Its vision: a local energy supply that is simple, fast and available everywhere. To optimize both internal processes and the customer experience, we supported Solarize with tailor-made AI solutions based on state-of-the-art RAG technology.

The challenge

A market as complex as decentralized supply models involves an enormous body of knowledge, from regulatory requirements to technical details. The challenge was to make this knowledge immediately usable for both employees and interested parties, without manual searches through static documentation.

Our solution: a three-tier AI ecosystem

We have created three central touchpoints for Solarize that are based on a shared intelligent data infrastructure:

- Internal support bot (Knowledge Hub/Slack)

To increase internal efficiency, we developed a support bot that is directly integrated into the company's internal communication platform. The bot accesses Solarize's entire internal knowledge base. Employees can ask complex questions and receive precise answers, including sources, in a matter of seconds.

Benefit: Massive time savings for the team and faster onboarding processes.

- External support chatbot

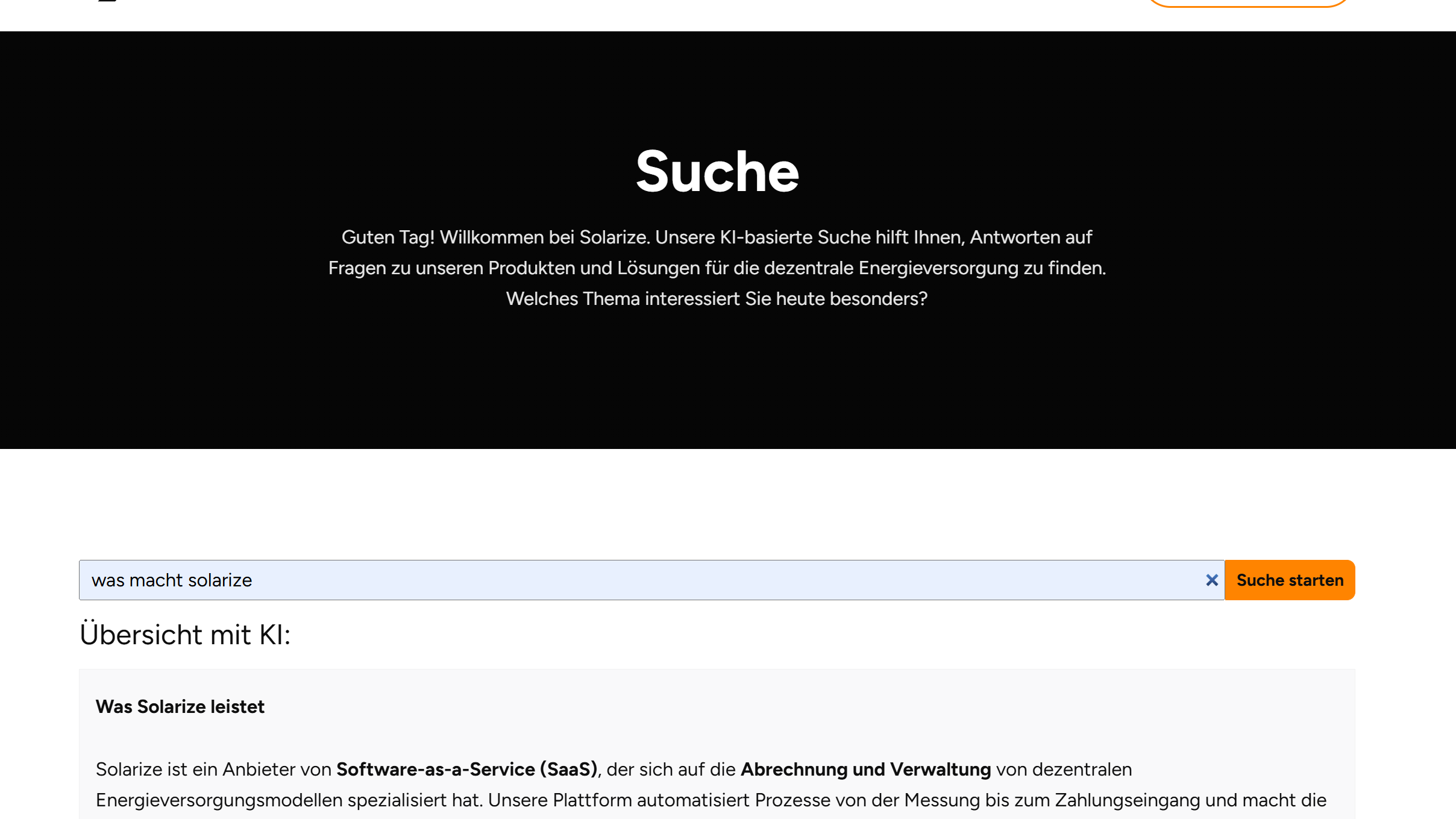

We have implemented an intelligent chatbot that answers technical and administrative questions about the Solarize SaaS solution for interested parties. It acts as a first point of contact and makes answers to frequently asked questions easily accessible.

- Intelligent AI website search

Instead of a classic, keyword-based search, we have integrated an AI search on the website. It interprets the intent (semantics) behind users' search queries and provides precise answers instead of just lists of links.

The technology behind the success: RAG & vector databases

At the heart of all three solutions is a RAG architecture (Retrieval Augmented Generation). Unlike a language model that relies only on what it learned during training, a RAG system retrieves from a specific knowledge pool at query time. This is how it works technically:

- Vector database: All documents, instructions and knowledge articles are converted into vectors (mathematical representations of content) and stored in a special database.

- Semantic retrieval: When a query is made, the system searches for meaning rather than words.

- Validated answers: The LLM (Large Language Model) generates the answer exclusively on the basis of the facts found in the vector database. This minimizes the risk of "hallucinations" and ensures that the answers are technically correct.

- Data security: The contractual framework explicitly excludes the use of data for language model training purposes. In addition, an internal control body ensures that no sensitive information is transmitted to the LLM.
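The steps above can be illustrated with a minimal Python sketch. It is not the Solarize implementation: the document texts, file names and function names are invented for illustration, the in-memory list only stands in for a vector database, and `embed` is a toy word-count vector that matches on shared words. A production system would presumably use a learned embedding model to capture meaning, and would send the resulting prompt to an LLM, which this sketch does not do.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge articles (texts invented for illustration).
DOCUMENTS = {
    "billing.md": "Tenant electricity billing combines solar power and grid power on one invoice.",
    "metering.md": "Smart meters record consumption per apartment every fifteen minutes.",
    "onboarding.md": "New employees get access to the knowledge hub on their first day.",
}


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a sparse word-count vector."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Stand-in for the vector database: (source, text, vector) entries in memory.
INDEX = [(source, text, embed(text)) for source, text in DOCUMENTS.items()]


def retrieve(query: str, k: int = 2) -> list[tuple[str, str]]:
    """Return up to k passages most similar to the query, skipping zero matches."""
    query_vector = embed(query)
    scored = [(cosine(query_vector, vec), source, text) for source, text, vec in INDEX]
    scored.sort(key=lambda item: item[0], reverse=True)
    return [(source, text) for score, source, text in scored[:k] if score > 0]


def build_prompt(query: str, passages: list[tuple[str, str]]) -> str:
    """Build a prompt that restricts the model to the retrieved passages."""
    context = "\n".join(f"[{source}] {text}" for source, text in passages)
    return (
        "Answer using only the context below and cite the source in brackets. "
        "If the context does not contain the answer, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


if __name__ == "__main__":
    question = "How does tenant electricity billing work?"
    print(build_prompt(question, retrieve(question)))
```

Restricting the prompt to retrieved passages and asking for bracketed sources is one common way to get answers with citations; how strictly a given LLM follows such an instruction still has to be checked in practice.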

The result

By implementing the AI solutions, Solarize was able to drastically reduce the hurdles involved in obtaining information. The combination of sustainability and cost-effectiveness that Solarize is driving forward in the energy world is now also reflected in its digital infrastructure: efficient, intelligent and future-proof.

"With the new AI infrastructure, we are making our concentrated expert knowledge immediately accessible to our stakeholders - a decisive lever for scaling the energy transition."